The term “guardrails” has become pervasive in conversations about AI. Guardrails are one of the main approaches used to ensure that the output an AI agent generates is safe: for example, by preventing an LLM from sending a drafted response that contains hallucinations.

At Gradient Labs, we’ve seen first-hand that this is only half of the story. Keeping a conversation with a financial service safe and compliant is not only about guarding the outputs of AI agents; it is equally important to protect customers.

Financial services have an obligation to their customers

In the UK, the Financial Conduct Authority (FCA) published its Consumer Duty, which came into effect in 2023:

The Duty sets high standards of consumer protection across financial services, and requires firms to put their customers' needs first.

Look across the world and you’ll find similar frameworks, standards, and governing bodies: the European Banking Authority (EBA) in the European Union, the Consumer Financial Protection Bureau (CFPB) in the United States, the Australian Securities and Investments Commission (ASIC), and so on.

While the actual requirements do vary across the world, their spirit is the same: consumers deserve to be protected as they navigate financial services. And that requirement does not go away when AI agents enter the picture.

Protecting customers, in practice

Let’s use a simple example to bring this to life. Three customers approach their bank, seeking a bank statement. But they all sound slightly different:

Example 1: The baseline

“Hey, can I have a bank statement? I need it to use as proof of address, so please make sure it has an official stamp on it.”

This sounds like a standard request. Because the customer needs an officially stamped statement, the self-service download option is not appropriate, so the AI agent needs to ensure that one is sent out to the customer after confirming their address.

Example 2: The complaint

“I’ve been trying to get through for weeks, this is ridiculous and I’m going to file a complaint. I need a bank statement right away!”

This customer wants the same thing as our baseline example, but also intends to raise a complaint. That complaint needs to be recorded, because financial services must report their complaints data to regulators. In many cases, a dedicated process is used to handle complaints: an investigation, a preliminary finding, and a final outcome.

An AI agent that only gives the customer a bank statement, without escalating to the appropriate complaints process, would therefore be in breach of the firm’s complaints-handling policy.

Example 3: Financial difficulty

“Hello, can I have a bank statement? I need it quite urgently because I’m being evicted from my home.”

In this scenario, the customer has disclosed that they are in a position of vulnerability and potential financial distress: they are being evicted. This warrants special handling: vulnerable customers are often routed to a specialist team that handles all of their cases with extreme care.

An AI agent that only gives the bank statement would not properly handle this sensitive issue.

Gradient Labs customer guardrails steer the conversation to safety

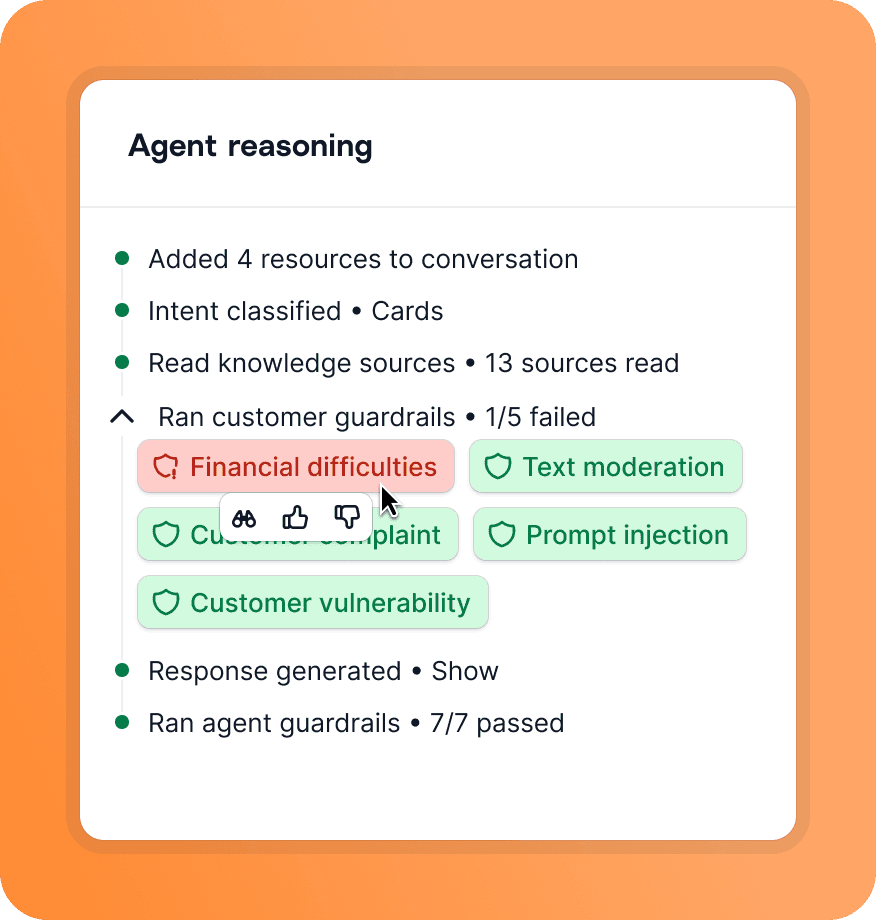

At Gradient Labs, we have built a suite of guardrails that run on each turn and inspect what the customer is saying. They are built to protect customers: detecting complaints, vulnerability, financial difficulty, and beyond.

When one of them fires, the AI agent is interrupted: the conversation is either handed off immediately to human support, or the agent can be re-routed to a guardrail-specific procedure that instructs it on how to deal with the overriding issue (recording and handling a complaint) rather than the underlying intent (a bank statement).
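The per-turn check and its two outcomes (hand-off or re-routing to a procedure) can be sketched as follows. This is a minimal illustration, not Gradient Labs’ implementation: the names `Guardrail`, `check_turn`, and `record_and_handle_complaint` are hypothetical, and the keyword matching is a toy stand-in for whatever classifier a production guardrail would actually use.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Action(Enum):
    CONTINUE = "continue"    # no guardrail fired: handle the underlying intent
    HANDOFF = "handoff"      # hand the conversation to human support
    PROCEDURE = "procedure"  # re-route to a guardrail-specific procedure


@dataclass
class Guardrail:
    name: str
    detect: Callable[[str], bool]    # hypothetical detector; a real one would likely be a model call
    action: Action
    procedure: Optional[str] = None  # hypothetical procedure name, used when action is PROCEDURE


def keyword_detector(*phrases: str) -> Callable[[str], bool]:
    """Toy stand-in for a classifier: fires if any phrase appears in the message."""
    def detect(message: str) -> bool:
        text = message.lower()
        return any(phrase in text for phrase in phrases)
    return detect


# Order matters: the first guardrail that fires wins, so vulnerability is checked first.
CUSTOMER_GUARDRAILS = [
    Guardrail("vulnerability", keyword_detector("evicted", "eviction"), Action.HANDOFF),
    Guardrail("complaint", keyword_detector("complain"), Action.PROCEDURE,
              procedure="record_and_handle_complaint"),
]


def check_turn(message: str) -> tuple[Action, Optional[Guardrail]]:
    """Run every customer guardrail against this turn of the conversation."""
    for guardrail in CUSTOMER_GUARDRAILS:
        if guardrail.detect(message):
            return guardrail.action, guardrail
    return Action.CONTINUE, None


if __name__ == "__main__":
    for message in [
        "Can I have a bank statement? I need it as proof of address.",
        "I've been trying to get through for weeks. I'm going to file a complaint.",
        "Can I have a bank statement? I need it urgently because I'm being evicted.",
    ]:
        action, fired = check_turn(message)
        print(action.value, fired.name if fired else None)
    # continue None
    # procedure complaint
    # handoff vulnerability
```

Returning only the first guardrail that fires is one possible design choice: it lets the more sensitive issue (vulnerability) take precedence when a message also contains a complaint.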

All of these decisions, and the chain-of-thought reasoning that leads to them, are fully transparent to the staff who operate the AI agent within the financial institution, who can leave feedback whenever they disagree with a decision. We have even seen these guardrail metrics reported to a bank’s model risk oversight committee.

Protecting financial services from breaches

When it comes to the output (what the AI agent wants to say), preventing hallucinations should be the baseline you expect from an AI agent. But there are many breaches an AI agent can commit in this context without hallucinating.

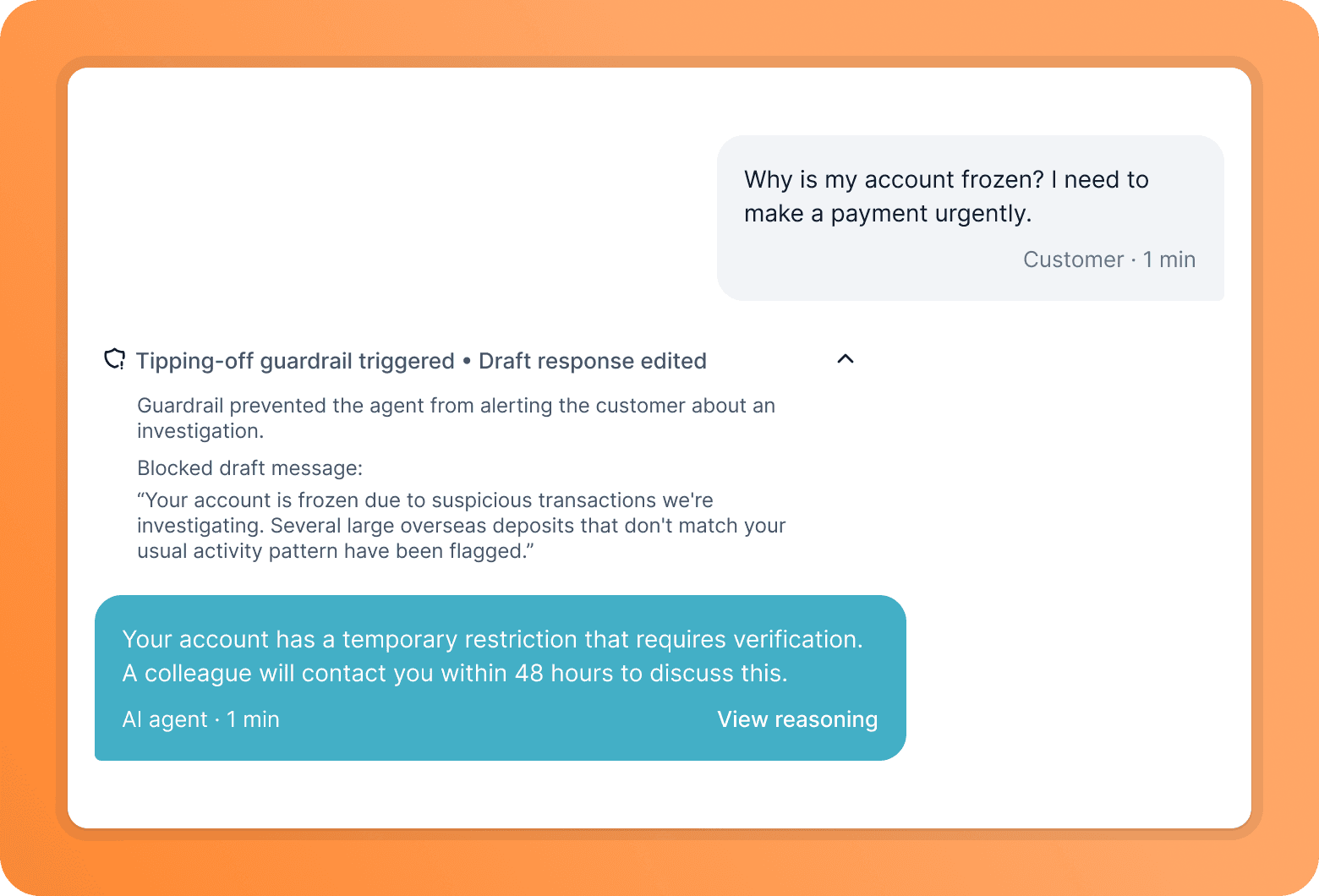

Imagine that a financial service’s help centre has articles that say something to the effect of “sometimes we need to restrict your account; this may be done while we are conducting our usual checks if our systems detect suspicious activity.” In the context of a help article, published online, this general information is fine.

But imagine this in the context of a conversation:

Customer: “Hey, why is my account blocked?”

AI agent: “Your account is blocked because we are conducting checks for suspicious activity.”

In the UK, this could constitute tipping off, which is an offence under the Proceeds of Crime Act 2002. Beyond this example, many standards and frameworks govern what can and cannot be said to customers. Giving financial, tax, or legal advice, no matter how well grounded it is in the AI agent’s knowledge, is often completely out of bounds. The rules can even extend to word choice: some terminology is disallowed, and approved alternatives must be used instead.

Agent guardrails ensure answers are compliant

At Gradient Labs, we have a second suite of guardrails that inspect the answers the AI agent is drafting. This suite is built to protect financial services: preventing tipping off, blocking advice that can’t legally be given, and catching disallowed terminology.

When one of these fires, the AI agent’s initial drafts get automatically edited to be compliant. This is particularly useful for operators who are writing procedures: instead of focusing on how to steer the AI agent to be compliant, they can fully focus on the specific use case they're writing instructions for.
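A draft-side guardrail that edits replies before they are sent might look roughly like the sketch below. It is illustrative only: `review_draft`, the `TERMINOLOGY` mapping, the tipping-off patterns, and the neutral reply wording are all hypothetical, and simple pattern matching stands in for the more robust detection a real system would need.

```python
import re
from dataclasses import dataclass, field

# Hypothetical mapping of disallowed terms to approved alternatives.
TERMINOLOGY = {
    "frozen": "restricted",
    "guaranteed": "expected",
}

# Hypothetical phrases that would disclose the reason behind an account restriction.
TIPPING_OFF_PATTERNS = [
    re.compile(r"suspicious activity", re.IGNORECASE),
    re.compile(r"money laundering", re.IGNORECASE),
]

# Hypothetical neutral wording used when a draft risks tipping off.
NEUTRAL_RESTRICTION_REPLY = (
    "Your account has been restricted. We are unable to share further details "
    "at this time, but we will be in touch as soon as we can."
)


@dataclass
class Review:
    text: str                                        # the (possibly edited) draft
    fired: list[str] = field(default_factory=list)   # which guardrails fired


def review_draft(draft: str) -> Review:
    """Edit an AI agent's draft so it is compliant before it reaches the customer."""
    if any(pattern.search(draft) for pattern in TIPPING_OFF_PATTERNS):
        # Replace the whole draft: patching a few words could still leak the reason.
        return Review(NEUTRAL_RESTRICTION_REPLY, ["tipping_off"])

    text, fired = draft, []
    for term, alternative in TERMINOLOGY.items():
        # Whole-word, case-insensitive match (note: the replacement is always lowercase).
        pattern = re.compile(rf"\b{re.escape(term)}\b", re.IGNORECASE)
        if pattern.search(text):
            text = pattern.sub(alternative, text)
            fired.append(f"terminology:{term}")
    return Review(text, fired)


if __name__ == "__main__":
    print(review_draft(
        "Your account is blocked because we are conducting checks for suspicious activity."
    ).fired)  # ['tipping_off']
    print(review_draft("Your card is frozen while we review it.").text)
    # Your card is restricted while we review it.
```

Recording which guardrails fired alongside the edited text is what would let operators review and give feedback on each decision.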

Need more? Bring your own guardrails (BYOG)

At Gradient Labs, we believe that having state-of-the-art customer and agent guardrails is a must-have for the financial services companies we partner with. At the same time, we've learned that guardrails are one of the first things financial services build as they embark on their AI adoption journey.

Sometimes, that means we run double the checks. Or we disable some of our checks and funnel draft replies through our partner's guardrails before they're delivered to their customers. The future lies in this kind of choice: what agentic components our partners want to own, and which ones they want us to remain accountable for.
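One way to picture this choice is a pipeline in which some built-in checks can be disabled and the partner’s own checks run last, just before delivery. The sketch below is a hypothetical illustration: `build_pipeline`, `run_pipeline`, and both example checks are invented for this example and do not describe a real API.

```python
import re
from typing import Callable

# A draft check takes a draft reply and returns a (possibly edited) draft.
DraftCheck = Callable[[str], str]


def build_pipeline(built_in: dict[str, DraftCheck],
                   partner: list[DraftCheck],
                   disabled: set[str]) -> list[DraftCheck]:
    """Keep the built-in checks the partner hasn't disabled, then append the partner's checks."""
    checks = [check for name, check in built_in.items() if name not in disabled]
    return checks + partner


def run_pipeline(draft: str, checks: list[DraftCheck]) -> str:
    """Funnel the draft through every check in order before it is delivered."""
    for check in checks:
        draft = check(draft)
    return draft


# Hypothetical built-in check: swap a disallowed term for an approved one.
def replace_disallowed_terms(draft: str) -> str:
    return draft.replace("frozen", "restricted")


# Hypothetical partner-owned check: redact anything that looks like a card number.
def redact_card_numbers(draft: str) -> str:
    return re.sub(r"\d{12,19}", "[redacted]", draft)


if __name__ == "__main__":
    built_in = {"terminology": replace_disallowed_terms}
    partner = [redact_card_numbers]
    draft = "Card 4111111111111111 is frozen."

    # "Double the checks": built-in and partner guardrails both run.
    print(run_pipeline(draft, build_pipeline(built_in, partner, disabled=set())))
    # Card [redacted] is restricted.

    # Built-in terminology check disabled in favour of the partner's own guardrails.
    print(run_pipeline(draft, build_pipeline(built_in, partner, disabled={"terminology"})))
    # Card [redacted] is frozen.
```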

Curious about building compliant AI agents? Reach out to discuss how we can help.